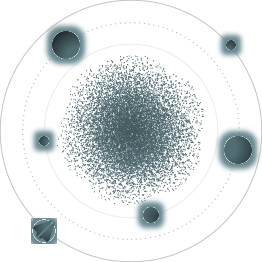

This is the fifth article in the “A Scanning Dream” series. Here we discuss the light field approach. In general, a light field describes the light flowing in every direction through every point of a 3D space over a given time interval. Geometrically, it is a set of rays parametrized by time, position, and orientation in space. Any set of one or more ordinary photos is a subset of the light field, and conversely, parts of a light field can be displayed as ordinary photos.
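To make the relation between photos and a light field concrete, here is a minimal sketch. It assumes a common simplification that is not spelled out in this article: time is dropped, and each ray is indexed by the camera position on a regular grid (u, v) plus the pixel position inside that camera's image. The array layout and the helper names `light_field_from_photos` and `sub_aperture_view` are hypothetical and chosen only for illustration.

```python
import numpy as np

def light_field_from_photos(photo_grid):
    """Stack a U x V grid (nested lists) of equally sized photos.

    Assumed layout of the result: (U, V, H, W, C).
    """
    return np.stack([np.stack(row) for row in photo_grid])

def sub_aperture_view(light_field, u, v):
    """Return the ordinary photo seen by the camera at grid position (u, v)."""
    return light_field[u, v]

# Synthetic data: a 2 x 3 camera grid with 4 x 5 pixel RGB photos.
rng = np.random.default_rng(0)
photos = [[rng.random((4, 5, 3)) for _ in range(3)] for _ in range(2)]

lf = light_field_from_photos(photos)   # photos -> light field subset
view = sub_aperture_view(lf, 1, 2)     # light field -> ordinary photo
print(lf.shape, view.shape)
```

Fixing the camera index gives back one of the original photos, which is the "vice versa" direction described above.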

How it works

It’s more practical to discuss the structured light field approach. A structured light field can be captured with a fixed array of ordinary cameras, or with a custom light field camera whose multiple lenses focus light onto a common sensor. Because the optical parameters of each lens and the distances between the lenses are known and fixed, depth information can be recovered for each pixel in each frame. The following video describes the light field approach well enough in the context of experimental renderers.
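As a rough illustration of why known optics and a fixed lens spacing give depth, consider the textbook relation for two rectified pinhole cameras: depth = focal length × baseline / disparity. The sketch below assumes that idealized model; the function name and the numbers are hypothetical, and real light field cameras need calibration and a disparity estimation step that is not shown here.

```python
import numpy as np

def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Convert per-pixel disparity (in pixels) to depth (in meters).

    Assumes rectified pinhole cameras. Zero or negative disparity
    is treated as "infinitely far away".
    """
    disparity = np.asarray(disparity_px, dtype=float)
    depth = np.full(disparity.shape, np.inf)
    valid = disparity > 0
    depth[valid] = focal_length_px * baseline_m / disparity[valid]
    return depth

# Hypothetical setup: 1000 px focal length, lenses 5 cm apart.
depths = depth_from_disparity([50.0, 10.0, 0.0],
                              focal_length_px=1000.0,
                              baseline_m=0.05)
print(depths)
```

The smaller the shift of a point between two neighboring views, the farther away it is, which is why depth accuracy drops with distance for a fixed baseline.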

There are modern experimental methods that relax the fixed camera array requirement by tolerating small rotations and offsets. This makes it possible to use the light field approach on smartphones: the user would have to move the phone along the shooting plane indicated by the software. But as of August 2020 no smartphone offers this technology, and we think this approach would be overkill for 3D reconstruction.

The light field approach can be used as a base for a new full 3D reconstruction method; this was done, for instance, in 3D Reconstruction and Rendering from High Resolution Light Fields. There is also The light field 3D scanner, which describes a hybrid approach that combines Structure From Motion with light fields to increase quality compared to the photogrammetric method. But all of these are still research, far removed from actual industry use.

The light field example closest to industry use is this magnificent Google experiment. Google reconstructed several places in 3D using a custom light field camera array rig, built a VR app from the captured data, and published it on Steam/SteamVR. The app immerses you in a photorealistic rendering of a place, but it differs significantly from ordinary 360-degree VR: you can move around with 6 degrees of freedom, whereas in ordinary 360-degree VR you can only rotate your head. Unfortunately, movement is limited to several square meters, and the result isn’t a full 3D reconstruction. Still, it was a great result and led to a unique Google side project, Seurat, which we will discuss in the article “A Scanning Dream: How to use the scanned scene or object”.

What is required

The best-known standalone light field devices are Raytrix and Lytro (the latter bought by Google). They provide a parallax effect and the ability to change focus to any point in the frame. They can also be used for measurement tasks, such as evaluating the quality of various mechanisms.
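The ability to change focus can be illustrated with the classic shift-and-add idea: shift each view in proportion to its camera offset, then average. The following is a minimal sketch under simplifying assumptions (integer pixel shifts, grayscale views, wrap-around at the borders). It is not the algorithm used by any particular device, and the function name `refocus` and the parameter `slope` are hypothetical.

```python
import numpy as np

def refocus(views, offsets, slope):
    """Shift-and-add refocusing over a set of views.

    views   -- list of 2D arrays (H, W), one per camera
    offsets -- list of (du, dv) camera offsets from the central camera
    slope   -- pixels of shift per unit of camera offset; choosing it
               selects which depth plane ends up in focus
    """
    acc = np.zeros_like(views[0], dtype=float)
    for img, (du, dv) in zip(views, offsets):
        shift_x = int(round(slope * du))
        shift_y = int(round(slope * dv))
        # np.roll wraps around the borders; acceptable for a toy example.
        acc += np.roll(img, (shift_y, shift_x), axis=(0, 1))
    return acc / len(views)

# Toy scene: one bright point whose image moves 2 px per camera step.
offsets = [(-1, 0), (0, 0), (1, 0)]
views = []
for du, dv in offsets:
    img = np.zeros((11, 11))
    img[5, 5 - 2 * du] = 1.0
    views.append(img)

sharp = refocus(views, offsets, slope=2)    # focused on the point's depth
blurred = refocus(views, offsets, slope=0)  # focused elsewhere
print(sharp[5, 5], blurred[5, 5])
```

When the slope matches the point's disparity, all views agree and the point stays sharp; with a different slope its energy is spread over several pixels, which is what defocus blur looks like in this model.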

No smartphone currently uses light field technology, but there is at least one patent, from LG, that describes a smartphone with a 4x4 camera array. A few years ago a company called “Pelican Imaging” also produced similar camera array modules for mobile devices, but it was acquired together with all its patents, and there have been no known developments since.

Limitations and Conclusion

Despite its long history, the light field array approach is known mostly from academic research and experiments. Besides the lack of affordable light field hardware, there is also the problem of storing the large number of photos that light field cameras usually produce. This issue can potentially be resolved by exploiting the high density of the captured frames: photos captured with only a small offset between them share many pixels and can be compressed effectively.
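The redundancy between neighboring frames can be shown with a deliberately idealized toy. It assumes a flat scene facing the cameras, so each view is simply the previous one shifted by a fixed number of columns; real scenes have depth-dependent shifts and occlusions, so practical codecs predict a view and store a residual instead. The `encode` and `decode` helpers are hypothetical names for this sketch only.

```python
import numpy as np

def encode(views, shift):
    """Keep the first view whole and only the new columns of the rest."""
    first = views[0]
    new_columns = [v[:, -shift:] for v in views[1:]]
    return first, new_columns

def decode(first, new_columns, shift):
    """Rebuild every view from the previous one plus its new columns."""
    views = [first]
    for cols in new_columns:
        prev = views[-1]
        views.append(np.hstack([prev[:, shift:], cols]))
    return views

# Synthetic flat scene: 8 views, each a 32 x 64 crop of a wide texture,
# with neighboring crops offset by 2 columns.
n, H, W, shift = 8, 32, 64, 2
rng = np.random.default_rng(0)
pano = rng.integers(0, 256, size=(H, W + (n - 1) * shift), dtype=np.uint8)
views = [pano[:, k * shift:k * shift + W] for k in range(n)]

first, new_columns = encode(views, shift)
restored = decode(first, new_columns, shift)

raw_bytes = sum(v.size for v in views)
stored_bytes = first.size + sum(c.size for c in new_columns)
print(raw_bytes, stored_bytes)
```

In this idealized case the views are restored exactly while storing only a fraction of the raw pixels; the denser the camera spacing, the smaller the shift and the larger the saving.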

The most significant difference from ToF and structured light systems is that light field array systems are passive. Their higher resolution potentially makes it possible to reconstruct objects of any size at any distance, limited only by the lens parameters. The light field approach can also potentially be used for objects with transparent, reflective, and plain (textureless) surfaces, which is not possible with the other methods. But of course, like any other passive optical method, the light field approach suffers in poor lighting conditions.

In the last article “A Scanning Dream: how to use the scanned object” we will discuss how to actually use the reconstructed object or scene in production and how close we are to the dream described in the first article.