Designing and building mobile applications is not a simple task. Whichever app you create, you will face numerous problems during development and even after the app’s release. The situation is even more complicated when the app handles personal information. Engineers who develop such apps need not just programming expertise but also some knowledge of the laws that govern data processing. In the healthcare industry, information that can compromise one’s anonymity is regulated by HIPAA.

Explaining HIPAA: what is this standard and where is it applied?

This abbreviation refers to the Health Insurance Portability and Accountability Act. It was passed by Congress in 1996 to secure so-called PHI (Protected Health Information) from unlawful exposure to the public. We will see which data is viewed as PHI later in the article; right now, let’s concentrate on HIPAA’s influence on app developers.

As you may have guessed, the impact of HIPAA is quite significant. For those of our readers who don’t remember what the world was like back in the day, we’ll describe the main signs of that period. The year 1996 was remarkable for the introduction of Java 1.0, the beginning of Google’s search engine development, and Steve Jobs’s return to Apple. The most popular cell phone of the time, the Motorola StarTAC, carried an impressive technical innovation – it could send text messages. Obviously, there was no such thing as a “mobile app,” and the word “smartphone” was yet to appear. The world was slightly different from what we know today, to say the least!

However, it’s not like HIPAA hasn’t evolved with time. The most recent updates to the Act were issued in 2013, 2016, and 2019. It’s worth mentioning that these updates were not aimed at changing the Act’s nature; they introduced new fines and recommendations from the Department of Health and Human Services.

HIPAA created many controversies, as it’s an imperfect solution. It increases development and healthcare expenditures for both individuals and businesses. It also involves some unpleasant limitations for doctors and patients. However, without HIPAA, our healthcare system would fall into chaos because information of millions of people would be exposed to the public.

Terms you need to know before creating HIPAA compliant applications

We have already covered the meaning of HIPAA itself, so it’s time to review ancillary terms, which you must know to be able to build an app that follows the standard. The list of most frequently seen terms includes:

- Covered Entities (CEs). Essentially, these are any healthcare facilities that handle patients’ personal data. Mind that the list is not limited to individual doctors and hospitals; CEs also include pharmacies, nursing homes, and even health insurance companies. Governmental healthcare programs are also viewed as Covered Entities and obliged to guarantee HIPAA compliance. Medicare and Medicaid, for instance, fall under this category.

- Business Associates (BAs). Entities in this category do not provide treatment directly but, rather, offer assistance to healthcare facilities. That’s right, app developers who are creating an application for a hospital (provided that the app handles personal information) will be considered Business Associates and will be responsible for guaranteeing HIPAA compliance.

- Business Associate Agreements (BAA). BAAs are designed to ensure that both a CE and a BA signing them are capable of following the HIPAA regulations. Business associates that sign such an agreement are obliged to comply with the HIPAA rules and will be considered fully responsible for violating the laws.

- Protected Health Information (PHI). This is probably the most complicated and obscure term of all. By definition, PHI is information about a person’s health condition that can reveal his or her identity. It is either collected or produced by CEs and BAs and inevitably involves some data about the health of a person.

Mind that within this article, we are providing a very broad description of the terms, which is insufficient for an in-depth understanding of the matter. If you want to learn every detail about developing HIPAA compliant applications, please review the official guides by HHS and other governmental institutions.

Which apps have to be HIPAA compliant?

If you are creating an app for a medical facility, the Act will inevitably be the first thing to consider (in fact, it should be discussed with all involved employees even before you start developing the app). But which apps are required to be HIPAA compliant? The answer is not as simple as it may seem.

Consider the following example: Apple Health is measuring your pulse (presuming that you have an Apple Watch, of course) and the number of steps you make per day. Should the app be considered as collecting PHI? The law clearly states: no, it shouldn’t. Even though your iPhone knows that you are you, has your home address and access to your personal information, up to the 3D model of your face and fingerprints (depending on the model), Apple Health doesn’t have to be HIPAA compliant. Pulse and steps do not reveal your identity, meaning that compliance with the Act is not required for an app that collects this information.

A similar rule applies to most fitness apps, as they don’t collect any PHI. However, medical applications that are aimed at gathering personal information are a totally different thing. Most apps that are designed to be used by doctors, nurses, and, of course, their patients must comply with the HIPAA rules. Overall, to clearly understand which app is required to be HIPAA compliant, it is strongly advised to consult a legal counselor and conduct an in-depth evaluation of the matter.

To further understand how HIPAA works, consider the following example: your company is developing an app for a hospital. Its functions would include monitoring the health of patients and, of course, handling their personal information. For our example, other functionality doesn’t really matter. If your company is providing such an app, you will be considered a Business Associate and will be held completely accountable for violating the HIPAA rules. Releasing a HIPAA-compliant app inevitably involves addressing numerous legal and technical issues, so the major digital distribution platforms have designed dedicated sets of guidelines for developers. For instance, Apple provides comprehensive guidelines covering the use of its HealthKit by third-party apps. Make sure to consult all credible sources you can find before you start developing a HIPAA-compliant application to avoid any contradictions with the law.

HIPAA compliance and privacy issues: which information is classified as private?

As we have already seen, data collected by a generally available app, which is not intended to be used by a single healthcare facility, is not considered to be PHI. We also know that even information that has nothing to do with health, such as date of birth or zip code, can be classified as PHI under certain circumstances. Information about one’s genes and family, for instance, is a part of PHI only when it is collected by a CE or a BA. If it is gathered through public research, such data will not be protected by the law.

How do you handle data storage in HIPAA-compliant applications?

After you have clearly defined that an app you are building has to be HIPAA compliant, it’s time to actually get to the practical side. How can developers protect sensitive information about patients? Obviously, such an app would require more serious precautions than a typical project (which, by the way, can increase development costs).

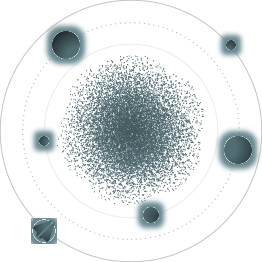

The first step on the way to providing proper data management to your clients is defining in what form the information will be stored (and transferred). You’ll need to define when the PHI will be encrypted, when it will be stored without additional protection, and how to transfer it between devices and in the backend. Mind that encryption at every stage of data processing is not really necessary, as it would only raise the maintenance costs and make the development of your app more complicated. Overall, the customers’ information commonly goes through the following 4 stages.

The first stage of data processing involves storing information on a device while the user is directly interacting with it. This includes making changes to the data or just reviewing it without performing any actions. Applying encryption at this stage is not suggested.

The second step involves protecting the data while it is not in use but still stored in the memory of the device. This requirement often becomes a source of security violations, since developers commonly use a library to save the data on a device while the device is not connected to the Internet. If the information is not encrypted during this process, you may end up violating the HIPAA compliance regulations, which may result in a significant fine. If you are designing an app for iOS devices, make sure to utilize the Secure Enclave. It allows you to store the encryption keys in a highly secured place.

The third step of data processing is the transition from the device to a server. Ideally, you should use the TLS protocol along with the most recent cipher suites at this stage. In addition, developers are encouraged to include certificate pinning in their apps. It is essential if you know that your clients will be connecting to the server through an unprotected network (and this happens more frequently than you think it should). However, certificate pinning is never out of place in app development, so consider using it even if you know that your clients won’t be utilizing any unprotected networks.

Thought that we are done with the device-server transition? Not at all! To guarantee HIPAA compliance, developers must utilize hostname validation. It is an essential part of the process and cannot be overlooked under any circumstances. Hostname validation protects your data from man-in-the-middle attacks.
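To make the device-to-server step a bit more tangible, here is a minimal sketch in Python (chosen only for brevity; a real mobile client would use its platform's networking stack). It assumes that "pinning" means comparing the SHA-256 fingerprint of the server certificate against a value shipped with the app. The function names and the choice of TLS 1.2 as the minimum version are our own assumptions, not requirements taken from HIPAA.

```python
import hashlib
import hmac
import socket
import ssl


def build_tls_context() -> ssl.SSLContext:
    """Client-side context: TLS 1.2 or newer, certificate and hostname checks on."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # assumed minimum, adjust as needed
    context.check_hostname = True                     # hostname validation
    context.verify_mode = ssl.CERT_REQUIRED           # reject unverified certificates
    return context


def pin_matches(der_certificate: bytes, pinned_sha256_hex: str) -> bool:
    """Compare the certificate's SHA-256 fingerprint with the pinned value."""
    actual = hashlib.sha256(der_certificate).hexdigest()
    return hmac.compare_digest(actual, pinned_sha256_hex.lower())


def open_pinned_connection(host: str, pinned_sha256_hex: str, port: int = 443) -> ssl.SSLSocket:
    """Open a TLS connection and refuse it if the certificate is not the pinned one."""
    context = build_tls_context()
    raw = socket.create_connection((host, port), timeout=10)
    try:
        tls = context.wrap_socket(raw, server_hostname=host)
    except Exception:
        raw.close()
        raise
    if not pin_matches(tls.getpeercert(binary_form=True), pinned_sha256_hex):
        tls.close()
        raise ssl.SSLError("server certificate does not match the pinned fingerprint")
    return tls
```

The hostname check and the pin check complement each other: the first confirms that the certificate was issued for the host you asked for, the second confirms that it is the exact certificate you expected.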

Finally, the last step of securing your clients’ data is protecting the servers where it is stored. To meet the HIPAA requirements, you need to ensure that your servers are secured from countless threats:

- hacker attacks, including ransomware attacks and session hijacking;

- natural threats, such as earthquakes, fires, or floods, which require proper backups to use in case of emergency;

- physical access by unauthorized individuals to a facility with your servers, which, as a consequence, must have 24/7 security.

In addition, developers are obliged to create a proper key management system and to guarantee protected key rotation, encryption, and audit logging. Keeping an encrypted backup is also a good idea.
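Of the items listed above, audit logging is the easiest to illustrate. The sketch below shows one possible approach: an append-only log in which every entry stores the hash of the previous one, so later edits become detectable. The hash chain, the class name, and the field names are our own illustrative choices; HIPAA does not prescribe this particular technique.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 hash of an entry (keys sorted so the result is reproducible)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, append-only log of who did what to which record."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str, record_id: str) -> dict:
        previous_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "record_id": record_id,
            "previous_hash": previous_hash,
        }
        entry["hash"] = _digest(entry)  # hash covers every field above
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or removed from the middle."""
        previous_hash = GENESIS_HASH
        for entry in self.entries:
            body = {key: value for key, value in entry.items() if key != "hash"}
            if entry["previous_hash"] != previous_hash or entry["hash"] != _digest(body):
                return False
            previous_hash = entry["hash"]
        return True
```

A production system would also persist the log outside the application server and restrict who can read it; the sketch only shows the tamper-evidence idea.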

Don’t want to create a comprehensive security system to store and process PHI? Well, there might be a solution for you since the Office for Civil Rights allows companies to use de-identification to guarantee HIPAA compliance. This topic definitely deserves a separate article, as it is a very complicated field.
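To give a rough idea of what de-identification looks like in practice, here is a deliberately incomplete sketch. It loosely follows the idea behind the "Safe Harbor" approach described in HHS guidance, where a fixed list of identifier types is removed or generalized. The field names are hypothetical, the identifier list is far from complete, and the sketch ignores the additional conditions the guidance attaches (for example, for ZIP codes in sparsely populated areas), so treat it as an illustration rather than a compliant implementation.

```python
# Hypothetical field names; the real list of identifier types is longer.
DIRECT_IDENTIFIERS = {
    "name",
    "email",
    "phone",
    "ssn",
    "street_address",
    "medical_record_number",
}


def deidentify(record: dict) -> dict:
    """Return a copy of the record with direct identifiers dropped or generalized."""
    cleaned = {}
    for field, value in record.items():
        if field in DIRECT_IDENTIFIERS:
            continue  # drop the field entirely
        if field == "zip_code":
            cleaned["zip3"] = str(value)[:3]  # keep only the first three digits
        elif field == "birth_date":
            cleaned["birth_year"] = str(value)[:4]  # assumes an ISO date, YYYY-MM-DD
        else:
            cleaned[field] = value
    return cleaned
```

For example, a record with `name`, `zip_code`, `birth_date`, and `diagnosis` fields would come back with only `zip3`, `birth_year`, and `diagnosis`.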

Cloud storage and HIPAA compliance

The first question that arises after you receive the list of requirements you have to fulfill for achieving HIPAA compliance is probably: “Can I use my cloud storage and still be compliant?” Well, that depends significantly on who your cloud storage provider is. Some providers run protected servers that satisfy almost all of the HIPAA rules.

Storing data on such servers may be quite beneficial, as they have already fulfilled a number of safety requirements, meaning that you can save some time, effort, and money. However, not all cloud servers are like this; if yours aren’t HIPAA compliant, you will need to switch to another provider if you really want to finish that mobile app.

What penalties can be applied to applications violating HIPAA?

The simplest explanation we could come up with is as follows: the higher the responsibility, the higher the fines applied if you violate the rules. HIPAA imposes some very strict regulations, and the consequences for not following them will be severe.

As we already know, any organization that provides software to a healthcare facility is viewed as a Business Associate by the law. That’s why your development team will be held accountable if you violate the regulations. By “accountable” we actually mean fined. How significantly? The smallest possible fine applied to BAs is set at $100 as of 2020. Mind that the fine itself depends on the nature of the violation. If it happened due to negligence, the penalty will be less severe, but willful violations may result in fines of up to $50,000. It’s worth noting that each violation type has a maximum annual penalty of $1,500,000. That’s 3 million dollars altogether if you decide to go against every possible law.

Theoretically, those numbers are perfectly accurate and applicable to every case. In reality, however, regulators have full legal authority to set the fine for each case as they see fit. The regulators are responsible for performing a thorough evaluation of every violation, assessing its consequences, and determining the terms of the breach. Let’s consider the following example:

- A software development company has violated the safety requirements and exposed the information of 100 patients to the public.

- Regulators discover that the violation was willful and set the fine at $5,000 per patient.

- We can see that the company will pay $500,000 for failing to provide full HIPAA compliance.
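The arithmetic of this example can be written down in a few lines. The function below is a simplified sketch: the name `total_fine` is ours, and applying the $1,500,000 annual maximum mentioned earlier as a cap on the total is an assumption about how the two figures interact. Real penalties are set by regulators case by case.

```python
ANNUAL_CAP_PER_VIOLATION_TYPE = 1_500_000  # figure quoted in the article


def total_fine(affected_patients: int, fine_per_patient: int,
               annual_cap: int = ANNUAL_CAP_PER_VIOLATION_TYPE) -> int:
    """Multiply the per-patient fine by the number of patients, up to the cap."""
    return min(affected_patients * fine_per_patient, annual_cap)


# The example above: 100 patients at $5,000 each.
example_fine = total_fine(affected_patients=100, fine_per_patient=5_000)
print(example_fine)  # 500000
```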

Mind that a penalty is not imposed in every case. After performing a thorough evaluation of the matter, the regulators may decide that the company only needs a corrective action plan to become completely HIPAA compliant. This approach would allow avoiding any fines and coming up with a mutually beneficial solution.

Real-world example of handling a HIPAA violation

This settlement case is one of the most recent ones (as of 2020), and it provides a great overview of the consequences of violating the HIPAA compliance rules. A Business Associate that was responsible for handling the PHI of a hospital’s patients had their mobile device stolen. As a result, the personal information of 412 patients was exposed to the public. In addition, regulators decided that the BA hadn’t taken any action to protect the data and did not have a risk management plan. The BA was charged with a $650,000 penalty due to the absence of proper risk analysis and compromised HIPAA policies. It’s worth noting that this sum wasn’t the maximum possible fine; the current situation in healthcare has impacted the regulators’ decision. Under normal circumstances, the BA might have been charged with a much larger fine, but their service to the population at risk was considered a mitigating factor.

Reviewing recent updates to HIPAA

As mentioned earlier, the most up-to-date corrections to the Act were made in 2013, 2016, and 2019. The Omnibus Rule imposed in 2013 was the last major update, which gave the Office for Civil Rights broader authority. The year 2016 is of particular interest to us since it is when the government introduced stricter regulations of BA – CE relationships. The Department of Health and Human Services levied multiple fines that year, mostly due to the absence of proper Business Associate Agreements between CEs and BAs. The most interesting thing about that year is the increased number of accused and fined Business Associates, which had rarely happened before 2016.

2016 was also remarkable for introducing a joint HHS – FTC guidance. On October 21st that year, these two entities provided a comprehensive guide for developers creating HIPAA compliant applications. The document was designed to remind programmers about the responsibilities they carry, particularly about the need to protect patients’ PHI by all means possible. Since that date, developers have been obliged to comply with both the Federal Trade Commission Act and the HIPAA rules. What is the role of the FTC in this venture? Well, the Commission regulates all policies that directly face customers. This means that in accordance with the new rule, all apps that guarantee HIPAA compliance are obliged to provide clear and honest privacy policies for users.

HIPAA compliance and your mobile app: facts you should know before developing the app

First and foremost, before you even start developing the software, it’s essential to remember that HIPAA compliant applications protect customer data much more carefully than any other type of consumer software (maybe except for banking applications, but that’s a different topic). Any violation of the HIPAA rules will be considered the developers’ responsibility and result in unpleasant consequences. Keeping that in mind, it’s time to evaluate practical aspects of the development process. Consider the following factors:

- Who will be using the app to enter and modify the PHI? If the app will be used by a patient only, ways to encrypt the data are quite clear. But what if that patient is unconscious/unable to enter his or her PHI? In this case, medical personnel will need to be provided with access to the app, which introduces lots of new challenges for the developers.

- What types of data will be stored? Remember that not all information is viewed as PHI by the law. This is exactly why seeking assistance from a legal counselor while developing such apps is strongly advised.

- Where will the patients’ information be entered? Let’s assume that we can guarantee that the patients’ information will be entered in a medical facility, say, at a registration desk. If the user will be registering in front of their doctor, everything seems to be fine. But what if we allow the patient to register at home? In this case, we don’t have any methods to check if the information they submit is true. In fact, we don’t even know how to verify their identity. What if the PHI will be entered by a relative without a patient’s consent? This will be considered a violation of HIPAA (though sharing PHI with family members under a patient’s written permission is allowed). You’ll need to consider all these things while developing apps to follow the standard, so make sure to pay attention even to seemingly insignificant details.
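The first question in the list, who may enter and modify PHI, usually ends up as an explicit access rule in code. Below is a minimal sketch under our own assumptions: two hypothetical roles, a patient who can edit only their own record, and a clinician who can edit the records of patients assigned to them. Real apps will need finer-grained rules, plus the identity verification discussed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: str
    role: str  # hypothetical roles: "patient" or "clinician"
    assigned_patient_ids: frozenset = frozenset()


def can_edit_phi(user: User, patient_id: str) -> bool:
    """Decide whether the user may enter or modify PHI for the given patient."""
    if user.role == "patient":
        return user.user_id == patient_id  # patients edit only their own record
    if user.role == "clinician":
        return patient_id in user.assigned_patient_ids
    return False  # unknown roles get no access by default
```

Denying access by default for any role the code does not recognize keeps a misconfigured account from silently gaining access to PHI.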

Prerequisites for creating HIPAA compliant programs

If you are developing apps of this type, there is a whole bunch of details to consider. First, you would need a team of experienced engineers. In a perfect situation, they would already possess some experience with making highly secured and encrypted apps. In reality, however, their expertise may not be sufficient to create a perfect product. A project manager would need to oversee every aspect of the app development process and to introduce corrections and amendments when needed.

After forming a team of developers to work on your future app, you will need to come up with a way to handle patients’ PHI. This includes reaching out to legal counselors to discover which information should be viewed as PHI, defining which technologies will be used for information encryption, and creating a comprehensive risk management strategy. At this stage, you will need to evaluate your servers (or those of your cloud storage provider) to define whether they are HIPAA compliant.

While developing the app itself, you’ll need to consider multiple threats, which can compromise your customers’ data, and ensure that your app is secured from all of them. For instance, it’s a widely applied practice to make HIPAA compliant applications invisible to other programs installed on a device. This way, you are creating a safer environment for the data by ensuring that other apps on a user’s device don’t even know that your app exists.

After the app is completed, it’s time to perform thorough testing and quality evaluation. Mind that you will rely on independent researchers as well as your own resources. An unbiased security audit is always performed by authorities when it comes to testing such apps so that the utmost level of safety can be guaranteed. All the applications that handle PHI are required to go through a third-party audit procedure, so make sure to prepare for it in advance.